Lessons from Molecules: OpenGL ES

I had a great time at the satellite iPhoneDevCamp in Chicago, where I was surprised by the number of well-known (to me, at least) developers who are located in the area. Although the San Francisco outpost may have had more attendees, I'd argue that our technical and business discussions were just as good. We certainly had better shirts (courtesy of Stand Alone).

Anyway, I was allowed to give a talk on some of the lessons that I'd learned in implementing the 3-D graphics in Molecules using OpenGL ES. It was well-received, so I figured that I should create a written guide based on my talk.

Before I get into the details, I want to make clear that nothing I say here should violate the NDA that Apple still requires iPhone developers to sign. OpenGL ES is an open standard, all benchmarks that I point to are either publicly available or were generated by an application that is currently in the App Store, and I will not make any reference to Apple's specific APIs. Molecules is nominally open source, but I cannot share the source code publicly until the NDA is lifted.

Also, I will refer to the iPhone throughout this post, but the iPod Touch has the same graphical hardware within it, so all lessons here will apply to that class of device as well.

3-D hardware acceleration on the iPhone

The Samsung SoC also features an implementation of Imagination Technologies' PowerVR MBX Lite 3D accelerator, ... This fourth-generation PowerVR chipset is basically an evolution of the second-generation graphics hardware used in the Sega Dreamcast...

"Under the Hood: The iPhone's Gaming Mettle" - Touch Arcade

Before we get into the software side of 3-D rendering on the iPhone, it helps to know what we can expect from the platform. This has been the cause of some debate lately, so I wanted to gather some hard numbers of my own and compare them with other benchmarks out there. Specifically, I wanted to see how many triangles per second the iPhone is capable of pushing, to give an idea as to the kind of complex 3-D geometry and frame rates it can support.

I used the current version of Molecules that's in the App Store (with a few small tweaks) to perform these tests. I loaded molecules of varying complexity (and thus, different numbers of triangles required to render) and measured the time it took to render 100 consecutive frames of rotation for these molecules. From that, I obtained the frame rate.

| Triangles in model | Frames per second | Triangles per second |
|---|---|---|
| 9,720 | 27.81 | 270,334 |
| 14,072 | 16.90 | 238,179 |
| 22,540 | 9.95 | 224,211 |
| 31,000 | 7.50 | 232,612 |
| 36,416 | 6.44 | 234,691 |
| 48,856 | 5.61 | 274,278 |
| 86,480 | 2.73 | 235,772 |
| 122,896 | 1.90 | 233,276 |

The results show an average throughput of about 243,000 triangles per second, with a standard deviation of about 7.7% of that average. GLBenchmark has published OpenGL ES benchmarks for the iPhone under a variety of conditions, and under these same conditions (no textures, smooth shading, one spotlight) they measure a higher throughput of 470,957 triangles per second. I don't know the exact mechanism of their benchmark, but the difference is most likely due to suboptimal code in my application.

These numbers need some context. I've tabulated the pure rendering performance statistics that I could find for other devices of interest:

| Device | Max triangles per second |
|---|---|
| iPhone [1] | 613,918 |
| Nokia N95 [2] | 719,206 |
| Nintendo DS [3] | 120,000 |
| Sony PSP [4] | 33,000,000 |

[1] http://www.glbenchmark.com/phonedetails.jsp?benchmark=pro&D=Apple%20iPhone&testgroup=lowlevel

[2] http://www.glbenchmark.com/phonedetails.jsp?benchmark=pro&D=Nokia%20N95&testgroup=lowlevel

[3]

[4]

I used the highest numbers I could find for each device, and real-world performance could be much lower, but I think the numbers tell a clear story. The highest ranked cell phone in GLBenchmark's tests was the Nokia N95, but it only holds a slight edge over the iPhone in pure performance. The bestselling portable gaming platform at the moment is the Nintendo DS, and the iPhone is clearly ahead of that, with up to five times the rendering performance. The Sony PSP figures are based on Sony's press numbers, and Sony is well-known for overstating the performance of their products, but even if real-world performance is only 20% of this it still comes out way ahead of the iPhone. If anyone in the PSP homebrew community has more reliable benchmark numbers to share, I'd be glad to post them.

Overall, the iPhone is a very capable device in terms of 3-D performance, even if it isn't the best of all portable devices. It does have a more powerful general-purpose processor than the PSP (a 412 MHz ARM CPU vs. a 222-333 MHz MIPS CPU), which could allow for better AI or physics. It's important to remember that, outside of the homebrew community, very few small developers will ever have a chance to make something for the DS or PSP, while the iPhone SDK and App Store are open to all. Finally, programming for the iPhone is far easier than programming for other mobile devices. For example, I wrote Molecules in the span of about three weeks, working on it only during nights and weekends.

Introduction to OpenGL ES

Now that we know what the 3-D hardware acceleration capabilities of the iPhone are, how do we go about programming the device? The iPhone uses the open standard OpenGL ES as the basis for its 3-D graphics. OpenGL ES is a version of the OpenGL standard optimized for mobile devices. OpenGL is a C-style procedural API that uses a state machine to let you control the graphics processor. That sounds confusing, but the gist of it is that OpenGL uses a small set of C-style functions to switch into a certain mode or state (setting up lighting, configuring the camera, drawing 3-D objects) and then perform work in that state. For example, you can enable lighting via

glEnable(GL_LIGHTING);

and then send commands to configure the lighting. When you are ready to set up geometry, you change to that state and send the commands needed for that.

OpenGL seems incredibly complex, and it's natural to want to throw up your hands and walk away, but I urge you to just spend a little more time with it. Once you get over the initial conceptual hurdles, you'll find that there's a reasonably simple command set involved. I was a complete newbie to OpenGL two months ago, and now I have a functional application based on it.

OpenGL has been around for a while and has accumulated a number of ways of doing the same tasks, some less efficient than others, so the standards committee took the opportunity with OpenGL ES to start fresh and clean out a lot of the cruft. (OpenGL ES 1.x is specified as a trimmed-down version of desktop OpenGL 1.x.) I won't go too far into what has changed for those familiar with OpenGL, but I'll list a few things that I came across. First, OpenGL ES drops support for immediate mode. This means that the following code:

glBegin(GL_TRIANGLE_STRIP);

glColor4f(1.0f, 1.0f, 1.0f, 1.0f);

glVertex3f(0.0f, 0.0f, 0.0f);

glVertex3f(1.0f, 0.0f, 0.0f);

glColor4f(1.0f, 1.0f, 1.0f, 1.0f);

glVertex3f(0.0f, 1.0f, 0.0f);

glVertex3f(1.0f, 1.0f, 0.0f);

glEnd();

will not work in OpenGL ES. Many of the OpenGL examples you find online use immediate mode and will need to be updated before they can work in OpenGL ES. Second, triangles (as lists, strips, or fans) are the only filled primitives you can draw; quads and general polygons are gone. Because any polygon can be built from triangles, those were seen as redundant. Third, OpenGL ES supports fixed-point numbers, which helps performance on non-desktop devices that lack floating-point processing power. Finally, OpenGL ES supports smaller data types for geometry data, which can save memory but can also cause problems. For example, unsigned shorts are the largest index data type you can use, which limits you to addressing only 65,536 vertices in one vertex array. This caused problems for me on some larger molecules until I started using multiple vertex arrays.
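Since Molecules feeds its vertex data to OpenGL ES as GL_FIXED values, it helps to see what that format is. OpenGL ES's GLfixed type is a signed 32-bit integer in 16.16 format (16 integer bits, 16 fractional bits). The sketch below uses a stand-in typedef rather than the real GL header so it compiles on its own; the helper names are hypothetical, not part of OpenGL ES.

```c
#include <assert.h>
#include <stdint.h>

/* Same layout as OpenGL ES's GLfixed: a signed 32-bit integer holding
   16 integer bits and 16 fractional bits. */
typedef int32_t fixed16_16;

#define FIXED_ONE 65536 /* 1.0 in 16.16 format */

/* Converts a float to 16.16, truncating toward zero. */
fixed16_16 float_to_fixed(float f) {
    /* 16 signed integer bits cover roughly [-32768, 32768). */
    assert(f >= -32768.0f && f < 32768.0f);
    return (fixed16_16)(f * (float)FIXED_ONE);
}

float fixed_to_float(fixed16_16 x) {
    return (float)x / (float)FIXED_ONE;
}

/* Multiplies two 16.16 values. The 64-bit intermediate holds a 32.32
   product; shifting right by 16 brings it back to 16.16. (Right-shifting a
   negative value is implementation-defined in C, but arithmetic on common
   compilers.) */
fixed16_16 fixed_mul(fixed16_16 a, fixed16_16 b) {
    return (fixed16_16)(((int64_t)a * (int64_t)b) >> 16);
}
```

Fixed point trades range for cheap integer math: values are limited to roughly ±32,768 with a resolution of 1/65,536, so coordinates need to be scaled into that range before conversion.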

For more on OpenGL ES, I'd highly recommend the book "Mobile 3D Graphics: with OpenGL ES and M3G" by K. Pulli, T. Aarnio, V. Miettinen, K. Roimela, and J. Vaarala. Also, Dr. Dobb's Journal has a couple of excellent articles on OpenGL ES: "OpenGL and Mobile Devices" and "OpenGL and Mobile Devices: Round 2". The latter focuses on iPhone development and contains material under NDA that I will not discuss here.

With the introductions over, I'd like to highlight some specific things that I've learned during the design of Molecules.

Simulating a sphere using an icosahedron

In Molecules, each atom of a molecule's structure is represented by a sphere. I tried out a number of different ways of representing a sphere in OpenGL before I arrived at the approach I use now. I currently draw an icosahedron, a 20-sided polyhedron, as a low-triangle-count stand-in for a sphere. The code to set up an icosahedron's vertex and index arrays is as follows:

#define X .525731112119133606

#define Z .850650808352039932

static GLfloat vdata[12][3] =

{

{-X, 0.0, Z}, {X, 0.0, Z}, {-X, 0.0, -Z}, {X, 0.0, -Z},

{0.0, Z, X}, {0.0, Z, -X}, {0.0, -Z, X}, {0.0, -Z, -X},

{Z, X, 0.0}, {-Z, X, 0.0}, {Z, -X, 0.0}, {-Z, -X, 0.0}

};

static GLushort tindices[20][3] =

{

{0,4,1}, {0,9,4}, {9,5,4}, {4,5,8}, {4,8,1},

{8,10,1}, {8,3,10}, {5,3,8}, {5,2,3}, {2,7,3},

{7,10,3}, {7,6,10}, {7,11,6}, {11,0,6}, {0,1,6},

{6,1,10}, {9,0,11}, {9,11,2}, {9,2,5}, {7,2,11}

};

This code was drawn from the OpenGL Programming Guide, also known as the Red Book.

I should define the terms vertex and index for those new to OpenGL. A vertex is a point in 3-D space of the form (x,y,z). An index is a number that refers to a specific vertex contained within an array. You can think of this in terms of connect-the-dots, where the dots are vertices and the numbers that tell you what to connect to what are the indices. In the above example, you see triplets of indices that specify one 3-D triangle each. The array of vertices and array of indices combine to define the 3-D structure of your objects. Arrays of color values and lighting normals complete the geometry.

Speaking of lighting normals, I was able to squeeze a little more out of the icosahedrons and make them a little closer to spheres by turning on smooth shading using

glShadeModel(GL_SMOOTH);

and setting the normal at each vertex to point outward from the center of the icosahedron through that vertex. If the normals had instead been perpendicular to the triangle faces, the edges of the polyhedron would look sharp and the object would be shaded as the faceted solid it actually is. By pointing the lighting normals through the vertices, the object was shaded like a sphere and the faces were smoothed.

Improving rendering performance using vertex buffer objects

As mentioned above, OpenGL has been around for a while and has accumulated many ways of performing the same tasks, such as drawing. Under normal OpenGL, there are at least three ways of drawing 3-D geometry to the screen:

- Immediate mode

- Vertex arrays

- Vertex buffer objects

Of these three, immediate mode support has been dropped in OpenGL ES (see code sample above). That leaves vertex arrays and vertex buffer objects.

Vertex arrays consist of what you'd expect: arrays of vertices, indices, normals, and colors that are written to the graphics chip every frame. They are reasonably straightforward to work with, and were the first thing I tried when implementing Molecules. To draw an icosahedron based on the above code, you'd use something like the following:

glEnableClientState(GL_VERTEX_ARRAY);

glVertexPointer(3, GL_FLOAT, 0, vdata);

glDrawElements(GL_TRIANGLES, 60, GL_UNSIGNED_SHORT, tindices);

You first tell OpenGL to use the array of vertices (vdata) that was set up before, then draw triangles based on the array of indices (tindices).

I was using this to draw icosahedrons, which I was then translating to different locations using the glTranslatef() function. The per-frame rendering performance was not very good, which I figured was due to the multiple glDrawElements() and glTranslatef() calls, so I calculated all the geometry ahead of time and just did one large glDrawElements() call. I still wasn't happy with the performance, so I took a look at my program in Instruments and found that most of its time was being spent copying data to the GPU.
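Precomputing the geometry means baking each atom's position (and size) into its vertices and offsetting its indices, so everything can go out in one glDrawElements() call. Here's a minimal C sketch of that idea, assuming one icosahedron per atom; MeshBuilder and mesh_append_atom() are hypothetical names, not Molecules' actual code, and plain float/unsigned short stand in for GLfloat/GLushort.

```c
#include <assert.h>
#include <stddef.h>

#define X .525731112119133606f
#define Z .850650808352039932f

/* The Red Book's unit icosahedron (same data as vdata/tindices above). */
static const float kIcoVertices[12][3] = {
    {-X, 0.0f, Z}, {X, 0.0f, Z}, {-X, 0.0f, -Z}, {X, 0.0f, -Z},
    {0.0f, Z, X}, {0.0f, Z, -X}, {0.0f, -Z, X}, {0.0f, -Z, -X},
    {Z, X, 0.0f}, {-Z, X, 0.0f}, {Z, -X, 0.0f}, {-Z, -X, 0.0f}
};
static const unsigned short kIcoIndices[20][3] = {
    {0,4,1}, {0,9,4}, {9,5,4}, {4,5,8}, {4,8,1},
    {8,10,1}, {8,3,10}, {5,3,8}, {5,2,3}, {2,7,3},
    {7,10,3}, {7,6,10}, {7,11,6}, {11,0,6}, {0,1,6},
    {6,1,10}, {9,0,11}, {9,11,2}, {9,2,5}, {7,2,11}
};

/* Hypothetical builder for one big vertex/normal/index set. The caller
   supplies arrays large enough for every atom. */
typedef struct {
    float *positions;        /* 3 floats per vertex */
    float *normals;          /* 3 floats per vertex */
    unsigned short *indices; /* 3 indices per triangle */
    size_t vertexCount;
    size_t indexCount;
} MeshBuilder;

/* Appends one atom: the unit icosahedron scaled by radius and moved to
   center, replacing a per-atom glTranslatef()/glDrawElements() pair. */
void mesh_append_atom(MeshBuilder *mesh, const float center[3], float radius) {
    /* 16-bit indices can only address 65,536 vertices per array. */
    assert(mesh->vertexCount + 12 <= 65536);
    for (size_t v = 0; v < 12; v++) {
        size_t out = (mesh->vertexCount + v) * 3;
        for (size_t axis = 0; axis < 3; axis++) {
            mesh->positions[out + axis] = center[axis] + radius * kIcoVertices[v][axis];
            /* The unit-sphere vertex doubles as its smooth-shading normal. */
            mesh->normals[out + axis] = kIcoVertices[v][axis];
        }
    }
    /* Offset each index by the vertices already in the mesh so it points at
       this atom's copy of the vertex. */
    for (size_t t = 0; t < 20; t++)
        for (size_t k = 0; k < 3; k++)
            mesh->indices[mesh->indexCount++] =
                (unsigned short)(mesh->vertexCount + kIcoIndices[t][k]);
    mesh->vertexCount += 12;
}
```

After appending every atom, the three arrays could be handed to glVertexPointer(), glNormalPointer(), and a single glDrawElements() call with indexCount as the count.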

This is where vertex buffer objects come into play. Vertex buffer objects (VBOs) are a relatively new addition to the OpenGL specification that provide a means of sending geometry data to the GPU once and having it cached in video RAM. The bus between the CPU and GPU can become a severe bottleneck, and VBOs reduce or eliminate that bottleneck.

Setting up a VBO requires code similar to the following (extracted from the current version of Molecules):

m_vertexBufferHandle = (GLuint *) malloc(sizeof(GLuint) * m_numberOfVertexBuffers);

m_indexBufferHandle = (GLuint *) malloc(sizeof(GLuint) * m_numberOfVertexBuffers);

m_numberOfIndicesForBuffers = (unsigned int *) malloc(sizeof(unsigned int) * m_numberOfVertexBuffers);

unsigned int bufferIndex;

for (bufferIndex = 0; bufferIndex < m_numberOfVertexBuffers; bufferIndex++)

{

glGenBuffers(1, &m_indexBufferHandle[bufferIndex]);

glBindBuffer(GL_ELEMENT_ARRAY_BUFFER, m_indexBufferHandle[bufferIndex]);

NSData *currentIndexBuffer = [m_indexArrays objectAtIndex:bufferIndex];

GLushort *indexBuffer = (GLushort *)[currentIndexBuffer bytes];

glBufferData(GL_ELEMENT_ARRAY_BUFFER, [currentIndexBuffer length], indexBuffer, GL_STATIC_DRAW);

m_numberOfIndicesForBuffers[bufferIndex] = ([currentIndexBuffer length] / sizeof(GLushort));

glBindBuffer(GL_ELEMENT_ARRAY_BUFFER, 0);

}

[m_indexArray release];

m_indexArray = nil;

[m_indexArrays release];

m_indexArrays = nil;

for (bufferIndex = 0; bufferIndex < m_numberOfVertexBuffers; bufferIndex++)

{

glGenBuffers(1, &m_vertexBufferHandle[bufferIndex]);

glBindBuffer(GL_ARRAY_BUFFER, m_vertexBufferHandle[bufferIndex]);

NSData *currentVertexBuffer = [m_vertexArrays objectAtIndex:bufferIndex];

GLfixed *vertexBuffer = (GLfixed *)[currentVertexBuffer bytes];

glBufferData(GL_ARRAY_BUFFER, [currentVertexBuffer length], vertexBuffer, GL_STATIC_DRAW);

glBindBuffer(GL_ARRAY_BUFFER, 0);

}

[m_vertexArray release];

m_vertexArray = nil;

[m_vertexArrays release];

m_vertexArrays = nil;

This looks quite complex, but let me explain what's going on here. Remember when I mentioned above that indices were limited to a maximum of 65,536 vertices, which forced me to use multiple index and vertex arrays? You can see that here: I loop over each vertex and index array and set each one up as a buffer object. glGenBuffers() starts things off by creating a new buffer and passing back a handle to it. glBindBuffer() binds that buffer to a target, GL_ELEMENT_ARRAY_BUFFER for indices or GL_ARRAY_BUFFER for vertices, normals, and colors, so that the following buffer calls operate on it. I created my arrays using NSData objects, so I grab their bytes for use in glBufferData(), which copies those bytes into the buffer object's storage. Note the use of GL_STATIC_DRAW. This hints to OpenGL that the contents of this buffer will not change, and allows the implementation to optimize for that case. Finally, the buffer is unbound by binding 0.
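The code above doesn't show how the geometry gets divided among the multiple arrays in the first place. One simple approach, sketched here as an assumption rather than Molecules' actual logic, is to fill each buffer greedily with whole objects (atoms, bonds) until the next one would push it past 65,536 vertices. Indices inside each buffer then have to count from that buffer's first vertex.

```c
#include <assert.h>
#include <stddef.h>

/* GLushort indices can address at most this many vertices per buffer. */
#define MAX_VERTICES_PER_BUFFER 65536u

/* Assigns objects (atoms, bonds, ...) in drawing order to buffers so that no
   buffer exceeds the 16-bit index limit and no object straddles two buffers.
   verticesPerObject[i] is object i's vertex count; bufferForObject[i]
   receives its buffer number. Returns how many buffers are needed. */
size_t assign_buffers(const size_t *verticesPerObject, size_t objectCount,
                      size_t *bufferForObject) {
    size_t buffer = 0;
    size_t used = 0; /* vertices already placed in the current buffer */
    for (size_t i = 0; i < objectCount; i++) {
        size_t n = verticesPerObject[i];
        assert(n <= MAX_VERTICES_PER_BUFFER); /* one object must fit on its own */
        if (used + n > MAX_VERTICES_PER_BUFFER) {
            buffer++; /* start a new vertex/index buffer pair */
            used = 0;
        }
        bufferForObject[i] = buffer;
        used += n;
    }
    return objectCount == 0 ? 0 : buffer + 1;
}
```

For example, with 6,000 icosahedral atoms of 12 vertices each, the first 5,461 atoms (65,532 vertices) land in buffer 0 and the rest in buffer 1.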

Note that once the geometry has been sent to video RAM, there's no need to keep it around locally, so all NSData objects containing the arrays are released.

Now, you just need to refer to these VBOs every frame when you do your drawing and the geometry data will all be handled locally by the GPU:

glEnableClientState (GL_VERTEX_ARRAY);

glEnableClientState (GL_NORMAL_ARRAY);

glEnableClientState (GL_COLOR_ARRAY);

unsigned int bufferIndex;

for (bufferIndex = 0; bufferIndex < m_numberOfVertexBuffers; bufferIndex++)

{

glBindBuffer(GL_ARRAY_BUFFER, m_vertexBufferHandle[bufferIndex]);

glVertexPointer(3, GL_FIXED, 0, NULL);

glBindBuffer(GL_ARRAY_BUFFER, m_normalBufferHandle[bufferIndex]);

glNormalPointer(GL_FIXED, 0, NULL);

glBindBuffer(GL_ARRAY_BUFFER, m_colorBufferHandle[bufferIndex]);

glColorPointer(4, GL_UNSIGNED_BYTE, 0, NULL);

glBindBuffer(GL_ELEMENT_ARRAY_BUFFER, m_indexBufferHandle[bufferIndex]);

glDrawElements(GL_TRIANGLES,m_numberOfIndicesForBuffers[bufferIndex],GL_UNSIGNED_SHORT, NULL);

glBindBuffer(GL_ELEMENT_ARRAY_BUFFER, 0);

glBindBuffer(GL_ARRAY_BUFFER, 0);

}

glDisableClientState (GL_COLOR_ARRAY);

glDisableClientState (GL_VERTEX_ARRAY);

glDisableClientState (GL_NORMAL_ARRAY);

Again, I loop through the sets of vertex buffer objects I've created. First, a buffer is bound with glBindBuffer() using the handle we received earlier. Then the type of data contained within that buffer is identified using a function such as glVertexPointer(). Note the NULL as the last argument, where in the vertex array case we passed an actual array pointer. While a buffer object is bound, that argument is interpreted as a byte offset into the buffer rather than a memory address, so NULL means "start at the beginning of the bound buffer." Finally, we call glDrawElements(), again with NULL as the last argument, this time as an offset into the bound index buffer.
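Because a bound buffer turns the pointer argument of glVertexPointer() and its relatives into a byte offset, you can also use a layout not used in the code above: interleaving positions, normals, and colors in a single buffer and pointing each attribute at its offset with a stride. The sketch below is self-contained C; InterleavedVertex and BUFFER_OFFSET are hypothetical names, and the OpenGL ES calls appear only in a comment because they need a live context.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical interleaved layout: position and normal as 16.16 fixed-point
   values (matching GL_FIXED), plus RGBA bytes (matching GL_UNSIGNED_BYTE),
   all stored in one GL_ARRAY_BUFFER. */
typedef struct {
    int32_t position[3];
    int32_t normal[3];
    uint8_t color[4];
} InterleavedVertex;

static_assert(sizeof(InterleavedVertex) == 28, "unexpected padding in InterleavedVertex");

/* Turns a byte offset into the pointer-typed argument OpenGL ES expects
   while a buffer object is bound. BUFFER_OFFSET(0) is NULL. */
#define BUFFER_OFFSET(bytes) ((const void *)(uintptr_t)(bytes))

/* With the interleaved buffer bound, the attribute setup would become:
     GLsizei stride = sizeof(InterleavedVertex);
     glVertexPointer(3, GL_FIXED, stride, BUFFER_OFFSET(offsetof(InterleavedVertex, position)));
     glNormalPointer(GL_FIXED, stride, BUFFER_OFFSET(offsetof(InterleavedVertex, normal)));
     glColorPointer(4, GL_UNSIGNED_BYTE, stride, BUFFER_OFFSET(offsetof(InterleavedVertex, color)));
*/
```

The stride tells OpenGL how many bytes to skip to reach the same attribute in the next vertex; with separate buffers, as in the code above, a stride of 0 means "tightly packed."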

As an anecdotal data point, I achieved approximately a 4-5X speedup by switching from vertex arrays to VBOs. Unfortunately, I don't have hard numbers to back this up, and that value may be confounded with other optimizations I was making at the same time. In any case, you should see a performance boost from going with VBOs. Now, when I run Instruments, the most-called function is glDrawTriangles, indicating that most of the time is being spent by the GPU actually drawing to the screen rather than waiting on data to be transmitted.

The downside to the VBO approach is that you will need to do all the geometry calculations yourself to lay out the vertices, normals, and indices. Also, this example worked really well with the VBO concept, because a molecule's geometry is generated once and then just manipulated. For objects that change their shapes, new geometry will need to be sent every frame. VBOs can still give an advantage here, as only the parts of the VBO that change need to be transmitted (using glBufferSubData()). Also, all geometry data will be transferred using DMA, so you will see performance improvements due to that as well.
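For partial updates, OpenGL ES provides glBufferSubData(), which replaces a byte range of an existing buffer. Here's a minimal C sketch, under my own assumptions, of computing the smallest range that covers a set of changed vertices; ByteRange, dirty_vertex_range(), and cpuCopy are hypothetical names, and the GL call appears only in a comment.

```c
#include <assert.h>
#include <stddef.h>

/* A byte range inside a vertex buffer object. */
typedef struct {
    size_t offset; /* bytes from the start of the buffer */
    size_t size;   /* number of bytes to upload */
} ByteRange;

/* Smallest contiguous range covering every changed vertex. Uploading it with
     glBufferSubData(GL_ARRAY_BUFFER, r.offset, r.size, (const char *)cpuCopy + r.offset);
   refreshes those vertices without resending the rest of the buffer. */
ByteRange dirty_vertex_range(const size_t *changedVertices, size_t changedCount,
                             size_t bytesPerVertex) {
    assert(bytesPerVertex > 0);
    ByteRange r = {0, 0};
    if (changedCount == 0)
        return r;
    size_t first = changedVertices[0], last = changedVertices[0];
    for (size_t i = 1; i < changedCount; i++) {
        if (changedVertices[i] < first) first = changedVertices[i];
        if (changedVertices[i] > last) last = changedVertices[i];
    }
    r.offset = first * bytesPerVertex;
    r.size = (last - first + 1) * bytesPerVertex;
    return r;
}
```

A single covering range is the simplest choice; if the changed vertices are widely scattered, several smaller uploads may move fewer bytes.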

Rotating the 3-D model from the user's point of view

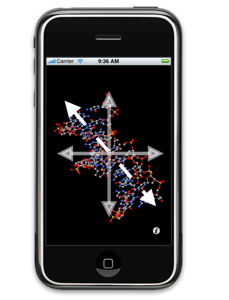

One last trick I wanted to pass along was how I accomplished the rotation of the 3-D molecules using the multitouch interface. Molecules uses the multitouch interface of the iPhone to perform rotation by moving one finger left-right or up-down on the screen, to zoom in and out on the molecule using a pinch gesture, and to pan around the molecule using two fingers moving at once.

Zooming in and out was simply a matter of calculating what the zoom factor was from how far apart your fingers are in the pinch gesture and calling

glScalef(x, y, z);

where x, y, and z are the scale factors in all three axes (1.0 being no change at all, 0.5 being half the size, and 2.0 being double the size).

Likewise, translation was done using

glTranslatef(x, y, z);

where x, y, and z are the amounts by which to offset the object in all three axes.
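Panning can be derived in a similar way from how far the midpoint between the two fingers moved. In this sketch, TouchPoint and pan_translation() are hypothetical, unitsPerPoint is a hypothetical conversion factor from screen points to model units, and the translation is assumed to be applied in eye space (before any rotation), so it stays in the screen plane.

```c
#include <assert.h>

/* Hypothetical touch location in screen points (y grows downward). */
typedef struct {
    float x, y;
} TouchPoint;

/* Translation for glTranslatef() from how far the midpoint between two
   fingers moved since the previous touch event. */
void pan_translation(TouchPoint prev1, TouchPoint prev2,
                     TouchPoint now1, TouchPoint now2,
                     float unitsPerPoint, float out[3]) {
    assert(unitsPerPoint > 0.0f);
    float dx = ((now1.x + now2.x) - (prev1.x + prev2.x)) * 0.5f;
    float dy = ((now1.y + now2.y) - (prev1.y + prev2.y)) * 0.5f;
    out[0] = dx * unitsPerPoint;
    out[1] = -dy * unitsPerPoint; /* screen y points down, OpenGL's y points up */
    out[2] = 0.0f;                /* panning stays in the screen plane */
}
```

The right value for unitsPerPoint depends on your projection and how far the model sits from the camera.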


Rotation was the tricky one. Rotation of an object is done by using

glRotatef(angle, x, y, z);

where angle is the amount of rotation, in degrees, and x, y, and z define the axis about which the model is to rotate. For example, (1, 0, 0) means for the object to rotate about the X-axis (imagine a rotisserie chicken). Where this gets tricky is that the axis we're talking about is in the model's coordinate system. When a user swipes his finger across the iPhone screen, he expects rotation to occur relative to his own perspective, which is different from the model's. After a bit of matrix math, it turns out you can do this conversion using the following:

GLfloat currentModelViewMatrix[16];

glGetFloatv(GL_MODELVIEW_MATRIX, currentModelViewMatrix);

glRotatef(xRotation, currentModelViewMatrix[1], currentModelViewMatrix[5], currentModelViewMatrix[9]);

glGetFloatv(GL_MODELVIEW_MATRIX, currentModelViewMatrix);

glRotatef(yRotation, currentModelViewMatrix[0], currentModelViewMatrix[4], currentModelViewMatrix[8]);

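This code grabs the current model view matrix, a 4x4 matrix that describes all the scaling, rotation, and translation that's been applied to the model up to this point. OpenGL stores this matrix in column-major order, so elements 1, 5, and 9 form the second row of its upper-left 3x3 rotation block, and elements 0, 4, and 8 form the first row. For a rotation, those rows are the screen's Y and X axes expressed in the model's coordinate system, which is exactly the axis glRotatef() needs. That lets you solve this seemingly complex geometry problem with just a couple of lines of code. This was a real headache, and hopefully it will save someone else some time.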
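To convince yourself the matrix trick works, you can check it numerically. This C sketch (my own, with hypothetical function names) takes a column-major modelview matrix, pulls out a row as the glRotatef() axis, and pushes that axis back through the matrix; for a rotation, the result should be the corresponding screen axis.

```c
#include <assert.h>

/* OpenGL matrices are column-major: the element in row r, column c of a
   4x4 matrix lives at m[c * 4 + r]. */

/* Row `row` of the upper-left 3x3 block: m[row], m[row + 4], m[row + 8].
   Row 0 is the screen's X axis and row 1 the screen's Y axis, expressed in
   model coordinates (valid for a rotation, optionally with uniform scale). */
void screen_axis_in_model_space(const float m[16], int row, float axis[3]) {
    assert(row >= 0 && row < 3);
    axis[0] = m[row];
    axis[1] = m[row + 4];
    axis[2] = m[row + 8];
}

/* Applies the upper-left 3x3 block of m to direction v (translation is
   ignored, as it should be for directions). */
void transform_direction(const float m[16], const float v[3], float out[3]) {
    for (int r = 0; r < 3; r++)
        out[r] = m[r] * v[0] + m[r + 4] * v[1] + m[r + 8] * v[2];
}
```

With a 90-degree rotation about Z plus any translation, row 1 comes back out as (0, 1, 0) and row 0 as (1, 0, 0). This relies on the 3x3 block being a rotation, possibly with uniform scale (glRotatef() normalizes its axis); a non-uniform glScalef() would break it.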

One thing to be careful of is the use of glGetFloatv(). I will be removing these calls from Molecules, because they decrease rendering performance by stalling the OpenGL rendering pipeline. This could be part of the reason for the lower benchmark numbers I saw compared to GLBenchmark's above.

Conclusion

I'd like to place a caveat on all of the above by saying that I am a relative newbie when it comes to OpenGL. I may not be explaining concepts properly or may be showing you suboptimal ways of drawing with OpenGL. I welcome any and all comments on this, and that's one of the reasons why I will open source Molecules: I want to learn from the experience of others and make a better, faster product. Also note that I haven't covered textures here, because Molecules doesn't use them.

Thanks again go to Chris Foresman at Ars Technica for giving me the chance to share my insights at the iPhoneDevCamp. Hopefully, you will find some of this useful, even without the complete Molecules source code. I'd love to see more programs, especially scientific ones, take full advantage of the iPhone's graphical hardware.